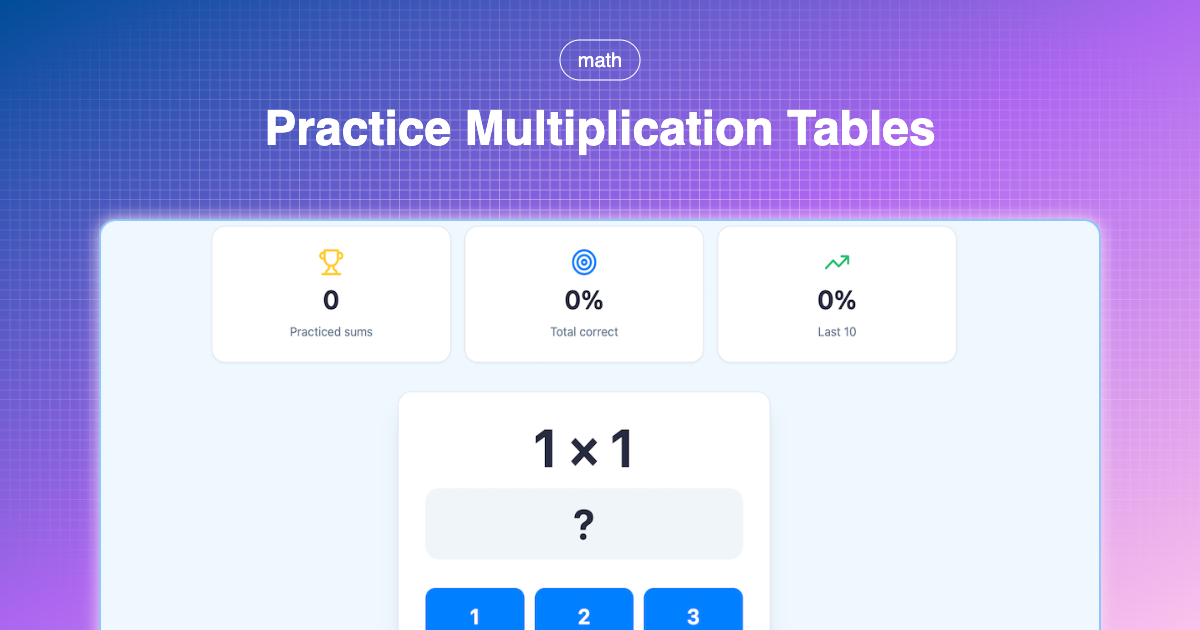

TL;DR: wget -r -E -k -p -np -nc --random-wait http://example.com

There are cases when you want to download a website as an offline copy. For me it’s usually because it’s a site I want to be able to use while I’m offline. That happens either by choice or because of planes that don’t have Wi-Fi available (Gen-1 planes ;)).

When we download a website for local offline use, we need it in full. Not just the HTML of a single page, but all required links, sub-pages, etc. Of course, any cloud-based functionality will not work, but documentation in particular is usually mostly static.

wget is (of course) the tool for the job.

To install wget on your Mac (if you don’t have it yet), you can use Homebrew in a Terminal window:

$ brew install wget
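
If you are not sure whether wget is already there, a quick check saves a pointless install. This is only a minimal sketch: `check_wget` is a name I made up for illustration, and it assumes Homebrew's `brew install wget` is how you would install it.

```shell
# check_wget is a hypothetical helper, not part of wget or Homebrew.
# It reports whether a wget command can be found and prints an
# install hint when it cannot.
check_wget() {
  if command -v wget >/dev/null 2>&1; then
    echo "wget found"
  else
    echo "wget missing: run 'brew install wget'"
    return 1
  fi
}
```

Running `check_wget` in the same shell session prints one of the two messages.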

As with most command-line tools, you can get an overview of the (very extensive) list of options using:

$ wget --help
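
Because that list is so long, it can help to filter it down to the one option you care about. A small sketch, where `wget_option` is a hypothetical helper name of my own:

```shell
# wget_option is a hypothetical helper, not part of wget.
# It prints only the lines of the help text that mention the given option.
# -F matches the option literally; -e lets the pattern start with a dash.
wget_option() {
  wget --help | grep -F -e "$1"
}
```

For example, `wget_option --convert-links` should show just the help line(s) for that option.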

In general when you want to use wget, you just use:

$ wget example.com

But that only gets you so far. You will now have the HTML of the root page of that website, but you can’t really use that to view the site on your laptop. You are missing all the supporting CSS files, images, JavaScript, etc. In addition, all links still point to the online website rather than to your local copy.

So we’ll use some options to get wget to do what we want.

$ wget -r -E -k -p -np -nc --random-wait http://example.com

Let’s go through those different command line options to see what they do:

-r or --recursive

Make sure wget uses recursion to download sub-pages of the requested site.

-E or --adjust-extension

Let wget change the local file extensions to match the type of data it received (based on the HTTP Content-Type header).

-k or --convert-links

Have wget change the references in the page to local references, for offline use.

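-p or --page-requisites

Don’t just follow links to other pages, but also download everything a page needs to display properly, such as images, stylesheets and scripts. Note that requisites hosted on a different domain are only fetched if you also allow wget to span hosts with -H (--span-hosts).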

-np or --no-parent

Restrict wget to the provided sub-folder. Don’t let it escape to parent or sibling pages.

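-nc or --no-clobber

Don’t download a file again if a copy of it already exists locally. This is handy when you re-run the command after an interrupted download.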

--random-wait

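Have wget vary the pause between downloading different files in order to not hammer the host server. According to the wget manual, the pause varies between 0.5 and 1.5 times the value set with --wait, so it is worth adding something like --wait=1 as well.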
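
If you would rather not remember the short flags, the same command can be wrapped in a small shell function using the long option names. Treat this as a sketch under a few assumptions: `mirror_site` is a hypothetical name, and `--wait=1` is my addition (it is not in the command above) so that `--random-wait` has a base delay to vary.

```shell
# mirror_site is a hypothetical helper, not part of wget.
# Usage: mirror_site URL
# Runs the same options as the short-flag command from this post,
# plus --wait=1 (an assumption, added so --random-wait has a base delay).
mirror_site() {
  wget \
    --recursive \
    --adjust-extension \
    --convert-links \
    --page-requisites \
    --no-parent \
    --no-clobber \
    --wait=1 \
    --random-wait \
    "$1"
}
```

You could then call it as `mirror_site http://example.com/docs/`, where `/docs/` is just a placeholder path. Keep the trailing slash when you point it at a sub-folder: without it, wget treats the last part of the URL as a file name, and --no-parent then restricts the download to the folder above it.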